This Project Taught Me a Lot!

While building Relio, I learned many new things.

Before this project, I mostly built very basic websites and mobile applications with simple functionality. Many of them were just GPT wrappers. This project pushed me to the next level, where I worked with Redis Streams, background workers, and distributed systems.

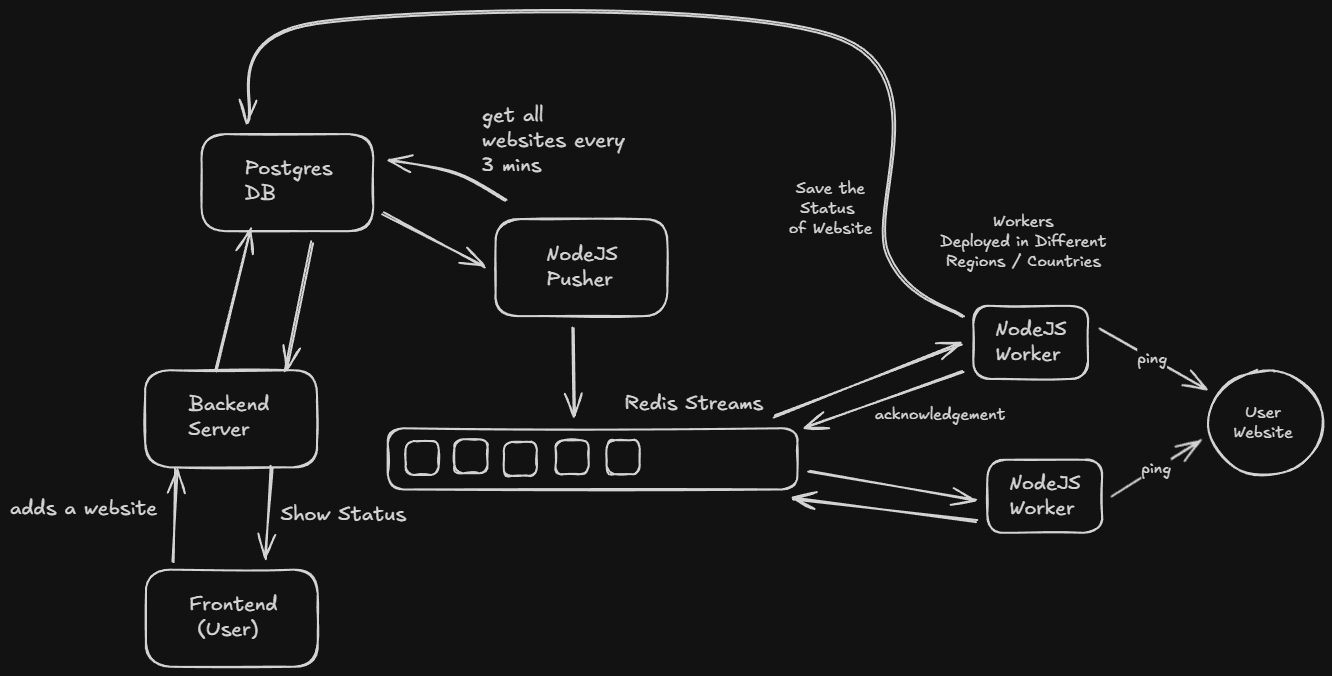

The idea of the project is simple. You add your website link, and your website is pinged every 3 minutes from different countries. If any incident occurs or your website goes down, you get notified. Along with that, you also get website speed metrics and detailed uptime history.

How does this work?

When a user adds a website link, it is stored in a PostgreSQL database. A process running on the server, called the Pusher, regularly fetches all websites from the database and pushes them into Redis Streams.
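Here is a minimal sketch of what one Pusher tick could look like. The `Website` shape, the `relio:checks` stream key, and the `RawRedis` interface are assumptions made for illustration, not Relio's actual code; any Redis client that can send a raw command could be adapted to that interface. The only Redis-specific part is the `XADD` command itself.

```typescript
// Hypothetical shape of a row in the websites table.
interface Website {
  id: string;
  url: string;
}

// Minimal client contract assumed for this sketch: anything that can send a
// raw Redis command as an array of strings.
interface RawRedis {
  send(args: string[]): Promise<unknown>;
}

// Assumed stream key; the real name in Relio may differ.
const STREAM_KEY = "relio:checks";

// Build the XADD command for one website.
// "*" asks Redis to generate the entry ID itself.
function buildXadd(site: Website, stream: string = STREAM_KEY): string[] {
  return ["XADD", stream, "*", "id", site.id, "url", site.url];
}

// One Pusher tick: enqueue every website and report how many were pushed.
async function pushAll(redis: RawRedis, sites: Website[]): Promise<number> {
  for (const site of sites) {
    await redis.send(buildXadd(site));
  }
  return sites.length;
}
```

Running `pushAll` on a timer would give a regular check cadence like the 3 minutes mentioned earlier.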

The stream has a consumer group for each region. Workers deployed in a region read from that region's consumer group. Each worker, running as a Node.js or Bun process, pulls a website from the stream and checks its status and response time.
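The region setup can be sketched with two Redis commands: `XGROUP CREATE` to create a group and `XREADGROUP` to read from it. The stream key and the `checkers:<region>` naming are hypothetical. The functions below only build the command arguments, so they would work with whichever client sends them.

```typescript
// Assumed names for this sketch; the real keys in Relio may differ.
const STREAM_KEY = "relio:checks";

// One consumer group per region, e.g. "checkers:eu" (hypothetical naming).
function groupFor(region: string): string {
  return `checkers:${region}`;
}

// XGROUP CREATE with "$" starts the group at new entries only;
// MKSTREAM creates the stream if it does not exist yet.
// Redis replies with a BUSYGROUP error if the group already exists.
function buildCreateGroup(region: string): string[] {
  return ["XGROUP", "CREATE", STREAM_KEY, groupFor(region), "$", "MKSTREAM"];
}

// XREADGROUP with ">" asks for entries never delivered to this group before.
// BLOCK makes the call wait up to blockMs milliseconds for new work.
function buildReadGroup(
  region: string,
  consumer: string,
  count: number = 10,
  blockMs: number = 5000,
): string[] {
  return [
    "XREADGROUP", "GROUP", groupFor(region), consumer,
    "COUNT", String(count), "BLOCK", String(blockMs),
    "STREAMS", STREAM_KEY, ">",
  ];
}

// Stream entries carry a flat [field, value, field, value, ...] list.
function fieldsToObject(flat: string[]): Record<string, string> {
  const out: Record<string, string> = {};
  for (let i = 0; i + 1 < flat.length; i += 2) {
    const key = flat[i];
    const value = flat[i + 1];
    if (key !== undefined && value !== undefined) {
      out[key] = value;
    }
  }
  return out;
}
```

Because every group keeps its own read position, each region sees every entry, while workers inside one group split the entries between them.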

After checking the website, the worker stores the status and speed data in the database. Ideally, this result handling could be moved to a separate queue for better scalability.
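As a rough sketch, the check and the record it produces might look like this. The `CheckResult` fields are hypothetical, and treating 2xx and 3xx responses as "up" is an assumption. The HTTP call is passed in as a `probe` function so the sketch does not depend on a particular runtime or HTTP library.

```typescript
// Hypothetical record a worker might store after each check.
interface CheckResult {
  url: string;
  region: string;
  up: boolean;
  status: number | null; // null when no HTTP response arrived
  responseMs: number;
}

// Turn a raw outcome into a record.
// Assumption: any 2xx or 3xx status counts as "up".
function toResult(
  url: string,
  region: string,
  status: number | null,
  startedMs: number,
  finishedMs: number,
): CheckResult {
  const up = status !== null && status >= 200 && status < 400;
  return { url, region, up, status, responseMs: finishedMs - startedMs };
}

// Injected HTTP call: resolves to the status code, rejects on network
// errors or timeouts.
type Probe = (url: string) => Promise<number>;

// Time one request and convert the outcome into a CheckResult.
async function checkWebsite(
  url: string,
  region: string,
  probe: Probe,
): Promise<CheckResult> {
  const started = Date.now();
  try {
    const status = await probe(url);
    return toResult(url, region, status, started, Date.now());
  } catch {
    // No response at all: record the site as down.
    return toResult(url, region, null, started, Date.now());
  }
}
```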

Why Redis Streams?

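Redis Streams provides consumer groups, which help divide website checks across different regions. Once a worker completes a task, it sends an acknowledgement (XACK) back to Redis. Until then, the task sits in the consumer group's pending list. The acknowledgement removes it from that pending list, not from the stream itself, so the stream has to be trimmed separately (for example with XTRIM or a MAXLEN cap on XADD).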

If a worker fails before acknowledging a task, the task stays pending, and another worker can claim it and retry it (with XAUTOCLAIM or XCLAIM). This makes the system more reliable and fault tolerant, because a check is not silently lost when a worker dies.
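In Redis terms, the acknowledgement is `XACK`, and picking up work that a failed worker left behind can be done with `XAUTOCLAIM` (available since Redis 6.2). The sketch below shows one way to wire this up: acknowledge only after the work succeeds, and let another worker claim anything that has been idle too long. The `RawRedis` interface and the `handleEntry` helper are hypothetical.

```typescript
// Minimal client contract assumed for this sketch.
interface RawRedis {
  send(args: string[]): Promise<unknown>;
}

// XACK removes the entry from the group's pending list.
function buildAck(stream: string, group: string, id: string): string[] {
  return ["XACK", stream, group, id];
}

// XAUTOCLAIM transfers entries that have been pending for at least
// minIdleMs to `consumer`, scanning the pending list from "0-0".
function buildAutoClaim(
  stream: string,
  group: string,
  consumer: string,
  minIdleMs: number,
  count: number = 10,
): string[] {
  return [
    "XAUTOCLAIM", stream, group, consumer,
    String(minIdleMs), "0-0", "COUNT", String(count),
  ];
}

// Run the work for one entry and acknowledge it only on success.
// On failure the entry is left pending so it can be claimed and retried.
async function handleEntry(
  redis: RawRedis,
  stream: string,
  group: string,
  id: string,
  work: () => Promise<void>,
): Promise<boolean> {
  try {
    await work();
  } catch {
    return false;
  }
  await redis.send(buildAck(stream, group, id));
  return true;
}
```

A worker could send the `XAUTOCLAIM` command before each normal read, so stale entries get retried before new ones are picked up.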

And yeah, that's pretty much it.

- Abhay